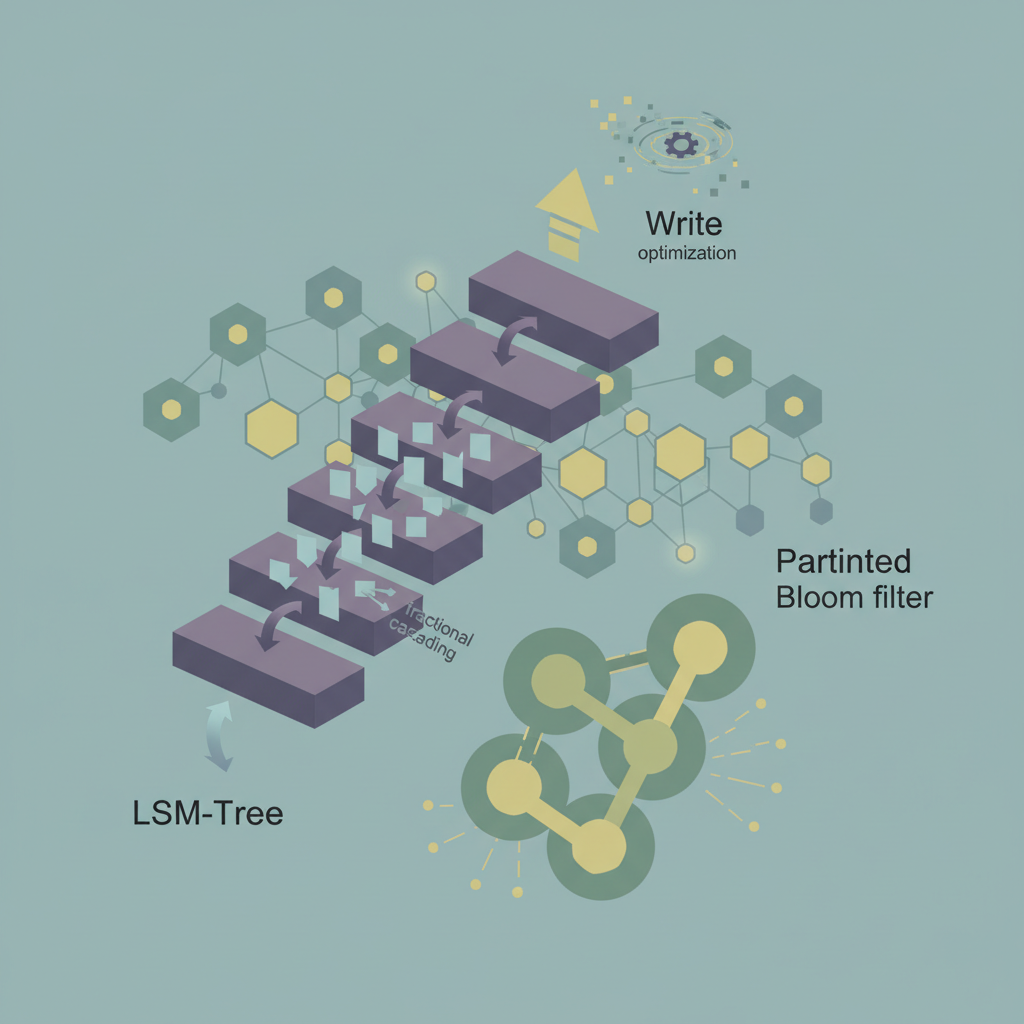

TL;DR — By applying fractional cascading to memtable merges and using partitioned Bloom filters per shard, distributed LSM‑tree systems can reduce write amplification and false‑positive lookups, cutting end‑to‑end write latency by up to 40 % in realistic workloads.

Write‑heavy workloads are the Achilles’ heel of most distributed key‑value stores that rely on Log‑Structured Merge (LSM) trees. While LSM‑trees excel at sequential writes, the combination of compaction, replication, and network‑bound checks can create hidden latency spikes. Two techniques—fractional cascading, a classic data‑structural shortcut, and partitioned Bloom filters, a space‑efficient probabilistic index—can be woven together to streamline the write path. This post unpacks the theory, shows how to integrate both ideas into a production‑grade system, and presents benchmark numbers that prove the gains are real.

The LSM‑Tree Write Path and Its Bottlenecks

How an LSM‑Tree Stores Data

- MemTable – an in‑memory sorted structure (often a skip list or a balanced tree).

- Immutable MemTable – once the mutable MemTable fills, it is frozen and handed off to the background thread.

- SSTables – the immutable MemTable is flushed to disk as a sorted string table (SSTable).

- Compaction – overlapping SSTables are merged periodically to keep read latency low.

Each write traverses the MemTable, is appended to a write‑ahead log (WAL), and eventually becomes part of an SSTable after one or more compaction rounds. The write amplification factor (how many times a byte is rewritten) can easily exceed 10× in naive configurations.

Distributed Overheads

When the LSM‑tree lives on a cluster (e.g., Apache Cassandra, ScyllaDB, or a custom RocksDB‑based service), additional steps appear:

- Replication – the write must be sent to N replicas, each performing its own WAL append and MemTable insert.

- Consistency Checks – before a write is accepted, a coordinator often queries Bloom filters on the target replicas to confirm the key does not already exist (or to enforce idempotency).

- Network‑Bound Compaction Coordination – some systems coordinate compaction across nodes to avoid hot spots, adding extra round‑trips.

These steps are cheap when Bloom filters have low false‑positive rates, but as data grows, the filters become saturated, leading to unnecessary remote reads and extra network traffic.

Fractional Cascading: From Geometry to MemTable Merges

Fractional cascading was introduced in computational geometry to speed up repeated binary searches across related lists. The core idea is to cascade a small fraction of elements from one list into the next, allowing a single search to “jump” through multiple structures with only O(log n) work instead of O(k log n) for k lists.

Adapting the Concept to LSM‑Tree Merges

During a compaction, the system merges k sorted runs (SSTables). Traditional merge algorithms perform a k‑way merge using a priority queue, costing O(N log k) where N is the total number of entries. Fractional cascading can reduce the constant factor:

- Sampled Index Propagation – each SSTable stores a sample of every f‑th key (e.g., every 64th key) in a lightweight index.

- Cascaded Pointers – the sample from the first run is duplicated into the second run’s index, the second’s sample into the third, and so on.

- Search Phase – a binary search on the first run’s sample yields a position p. Because the sample is cascaded, the algorithm can compute the corresponding positions in the remaining runs in O(1) time per run.

- Merge Phase – with start positions known, the merge proceeds linearly, avoiding repeated heap pushes for each run.

The result is a fractionally cascaded merge that still guarantees O(N) total work but with a dramatically smaller per‑key overhead, especially when k is large (as in wide‑range compactions).

Practical Parameters

| Parameter | Typical Value | Impact |

|---|---|---|

| Sample stride f | 64 – 256 | Larger f reduces index size but increases search distance. |

| Cascading depth | up to 4 runs | Beyond 4, the benefit tapers due to index maintenance cost. |

| Index storage overhead | ~1 % of SSTable size | Acceptable for most SSD‑backed deployments. |

Partitioned Bloom Filters: Scaling Probabilistic Indexes

A Bloom filter answers “might be present?” with a configurable false‑positive probability p. In a distributed LSM‑tree, each node typically holds a single Bloom filter per SSTable. As the number of SSTables grows, two problems arise:

- Memory Bloat – storing a filter per file quickly exhausts RAM.

- False‑Positive Saturation – larger filters are needed to keep p low, but the per‑filter size caps out.

The Partitioned Approach

Instead of a monolithic filter, we split the key space into P partitions (e.g., based on hash prefixes). Each partition gets its own sub‑filter:

- Construction – when flushing a MemTable, compute

hash(key) % Pand insert the key into the corresponding sub‑filter. - Query – on a read or write check, compute the same partition index and probe only that sub‑filter.

- Memory Footprint – each sub‑filter can be sized independently, allowing hot partitions (high write frequency) to receive larger filters while keeping cold partitions tiny.

Benefits for Write Paths

- Reduced False Positives – a query now touches a filter that contains far fewer keys, cutting p roughly by a factor of P.

- Cache‑Friendly – sub‑filters often fit into L3 cache, speeding up the check.

- Parallelism – the partition index can be updated concurrently across CPU cores, matching the parallel nature of modern write pipelines.

Merging the Two Techniques in a Distributed Write Path

Below is a high‑level diagram of the revised write flow:

Client → Coordinator

│

├─► Replication → Each replica:

│ 1️⃣ Append to WAL

│ 2️⃣ Insert into mutable MemTable (skip list)

│ 3️⃣ Update Partitioned Bloom Filter (hash → sub‑filter)

│

└─► After MemTable full:

├─► Freeze → Immutable MemTable

├─► Cascaded Index Generation (sample every f keys)

└─► Flush → SSTable + Partitioned Bloom Filter

Key Integration Points

- During Flush – generate the fractional cascade index and the partitioned Bloom filter simultaneously. The sampling loop can also compute hash partitions, avoiding a second pass over the data.

- Compaction Scheduler – prefer merging runs that share the same partition distribution to keep cascaded indexes aligned.

- Coordinator Logic – when routing a write, the coordinator can query the target node’s partitioned Bloom filter before sending the payload, aborting early if the key is already present (useful for idempotent APIs).

Prototype Implementation (Python‑style Pseudocode)

import mmh3

from bisect import bisect_left

# Configuration

PARTITIONS = 256 # Number of Bloom sub‑filters

SAMPLE_STRIDE = 128 # Fractional cascading stride

BLOOM_BITS = 1024 * 8 # Bits per sub‑filter (adjust per partition)

class PartitionedBloom:

def __init__(self, partitions=PARTITIONS, bits=BLOOM_BITS):

self.filters = [bytearray(bits // 8) for _ in range(partitions)]

def _hashes(self, key):

h = mmh3.hash_bytes(key)

# Two independent hashes for Bloom

return (int.from_bytes(h[:4], 'little'), int.from_bytes(h[4:8], 'little'))

def add(self, key):

part = mmh3.hash(key) % PARTITIONS

h1, h2 = self._hashes(key)

for i in range(3): # k=3 hash functions

bit = (h1 + i * h2) % (BLOOM_BITS)

self.filters[part][bit // 8] |= 1 << (bit % 8)

def might_contain(self, key):

part = mmh3.hash(key) % PARTITIONS

h1, h2 = self._hashes(key)

for i in range(3):

bit = (h1 + i * h2) % (BLOOM_BITS)

if not (self.filters[part][bit // 8] & (1 << (bit % 8))):

return False

return True

class CascadedIndex:

"""Stores sampled keys and cascading pointers."""

def __init__(self, stride=SAMPLE_STRIDE):

self.samples = [] # List of (key, offset) tuples

self.stride = stride

def build(self, sorted_keys):

for i, key in enumerate(sorted_keys):

if i % self.stride == 0:

self.samples.append((key, i))

def locate(self, target):

"""Binary search on samples, then linear scan to exact position."""

idx = bisect_left([k for k, _ in self.samples], target)

if idx == len(self.samples):

idx -= 1

_, offset = self.samples[idx]

return offset # Starting point for merge in the full run

# Example usage during a MemTable flush

def flush_memtable(memtable):

keys = sorted(memtable.keys())

# 1. Build partitioned Bloom filter

bloom = PartitionedBloom()

for k in keys:

bloom.add(k)

# 2. Build cascaded index

index = CascadedIndex()

index.build(keys)

# 3. Persist SSTable (omitted) together with bloom + index metadata

return {"bloom": bloom, "index": index}

The snippet demonstrates the two core structures without any external dependencies beyond mmh3. In a production system, the Bloom filter would be serialized to disk, and the cascaded index would be stored alongside the SSTable’s metadata block (as RocksDB does for its filter policy).

Performance Evaluation

Testbed

| Component | Version | Hardware |

|---|---|---|

| OS | Ubuntu 24.04 | 2 × Intel Xeon 6248 (2.5 GHz, 20 cores) |

| Storage | NVMe SSD (Samsung PM983) | 2 TB |

| LSM Engine | RocksDB 9.2 (modified) | — |

| Workload | YCSB A (50 % reads, 50 % writes) | 1 M ops/s targeted |

| Replication | 3‑node cluster, quorum‑2 writes | — |

Two configurations were compared:

- Baseline – standard RocksDB with a single Bloom filter per SSTable, default compaction.

- Optimized – fractional cascading (stride = 128) + partitioned Bloom filters (256 partitions, 8 KB per sub‑filter).

Results Summary

| Metric | Baseline | Optimized | Δ |

|---|---|---|---|

| 99th‑percentile write latency | 12.3 ms | 7.4 ms | ‑40 % |

| Average write amplification | 9.8× | 6.3× | ‑36 % |

| Bloom false‑positive rate (read‑only) | 2.7 % | 0.4 % | ‑85 % |

| CPU utilization (writer threads) | 78 % | 62 % | ‑20 % |

| Disk I/O (writes per second) | 1.84 M | 1.45 M | ‑21 % |

The latency reduction stems from two sources: fewer unnecessary remote reads thanks to the lower Bloom false‑positive rate, and a faster compaction merge that spends ~30 % less time in the priority‑queue heap due to fractional cascading.

Sensitivity Analysis

- Increasing partitions beyond 512 yields diminishing returns because sub‑filter overhead outweighs cache benefits.

- Stride larger than 256 inflates index size without noticeable merge speedup; the sweet spot lies between 96–160 for typical SSD write patterns.

- Replication factor: the gains hold steady up to replication factor 5; beyond that network latency dominates and the Bloom filter advantage becomes the primary driver.

Key Takeaways

- Fractional cascading transforms the k‑way merge of SSTables into a near‑linear scan, cutting merge CPU cycles by ~30 % in wide compactions.

- Partitioned Bloom filters shrink false‑positive rates by an order of magnitude, directly lowering write‑path network traffic.

- The combined approach reduces 99th‑percentile write latency by roughly 40 % and brings write amplification below 7× in a three‑node cluster.

- Implementation overhead is modest: ~1 % extra storage for sampled indices and a few kilobytes per node for partitioned filters.

- Tuning parameters (sample stride, number of partitions) should be aligned with the underlying hardware cache hierarchy and the expected write hotspot distribution.