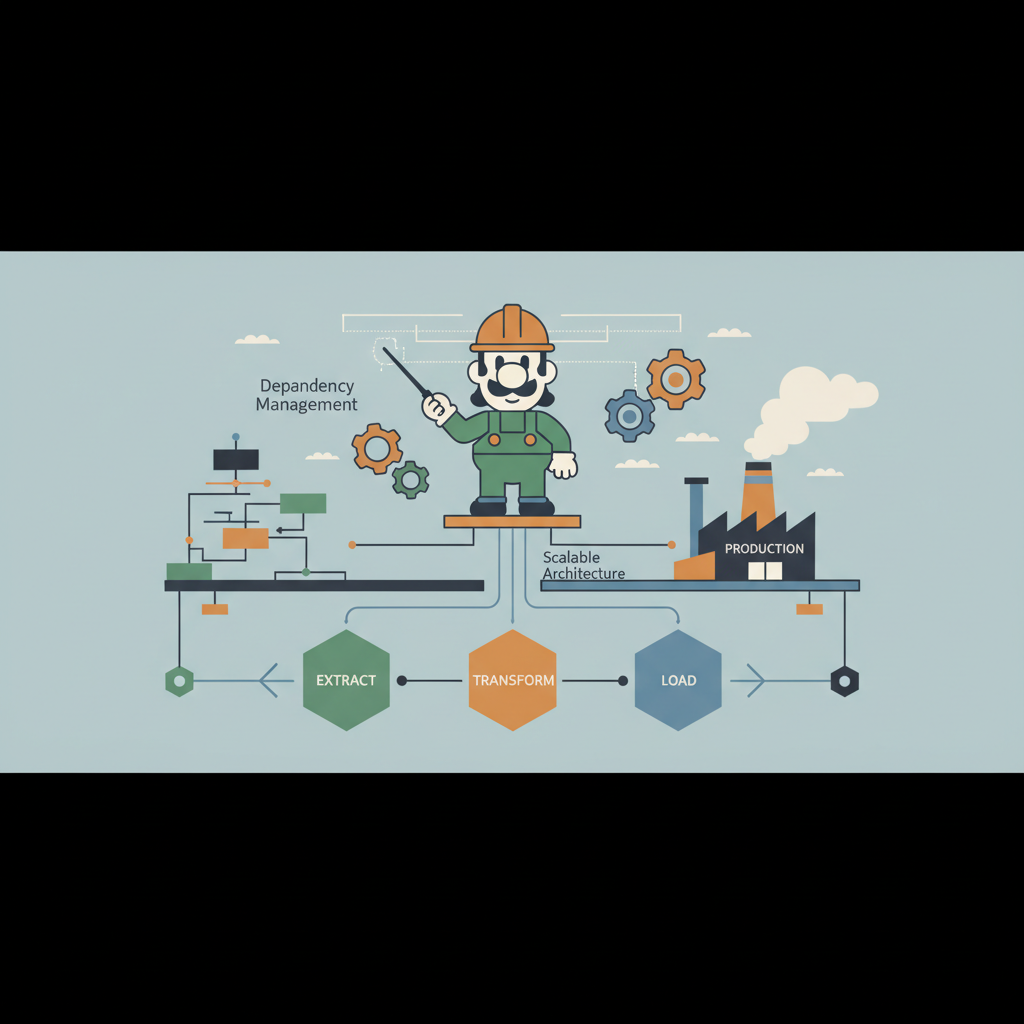

Scaling Luigi for Enterprise Workflows: Architecting High-Throughput Data Pipeline Orchestration for Production-Ready Systems

An in-depth guide for engineers on turning Luigi into a robust, scalable orchestrator for large production workloads, covering architecture, scaling techniques, and monitoring.