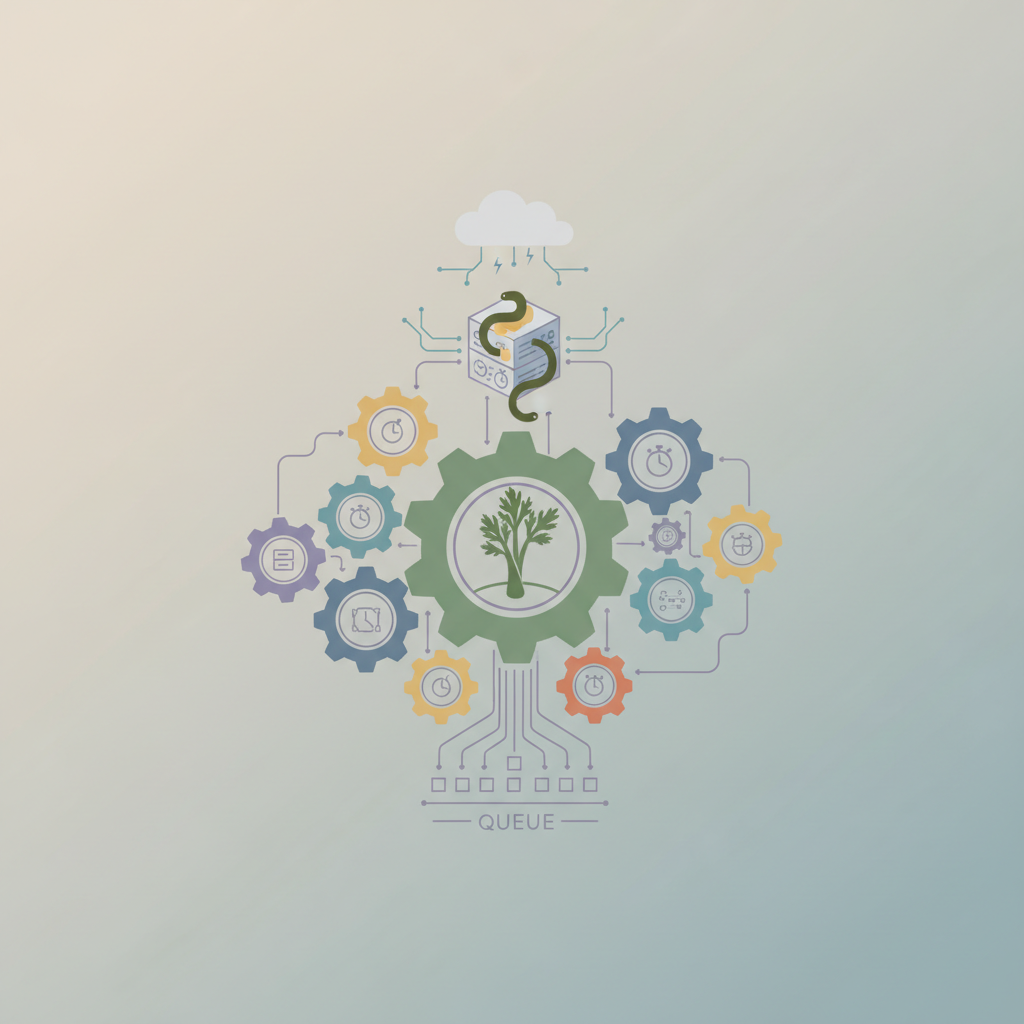

Architecting Distributed Task Queues with Celery: A Deep Dive into High-Performance Python Applications

Learn production-ready Celery architectures, from broker selection to worker tuning, with actionable tips for keeping your Python job pipeline fast and reliable.