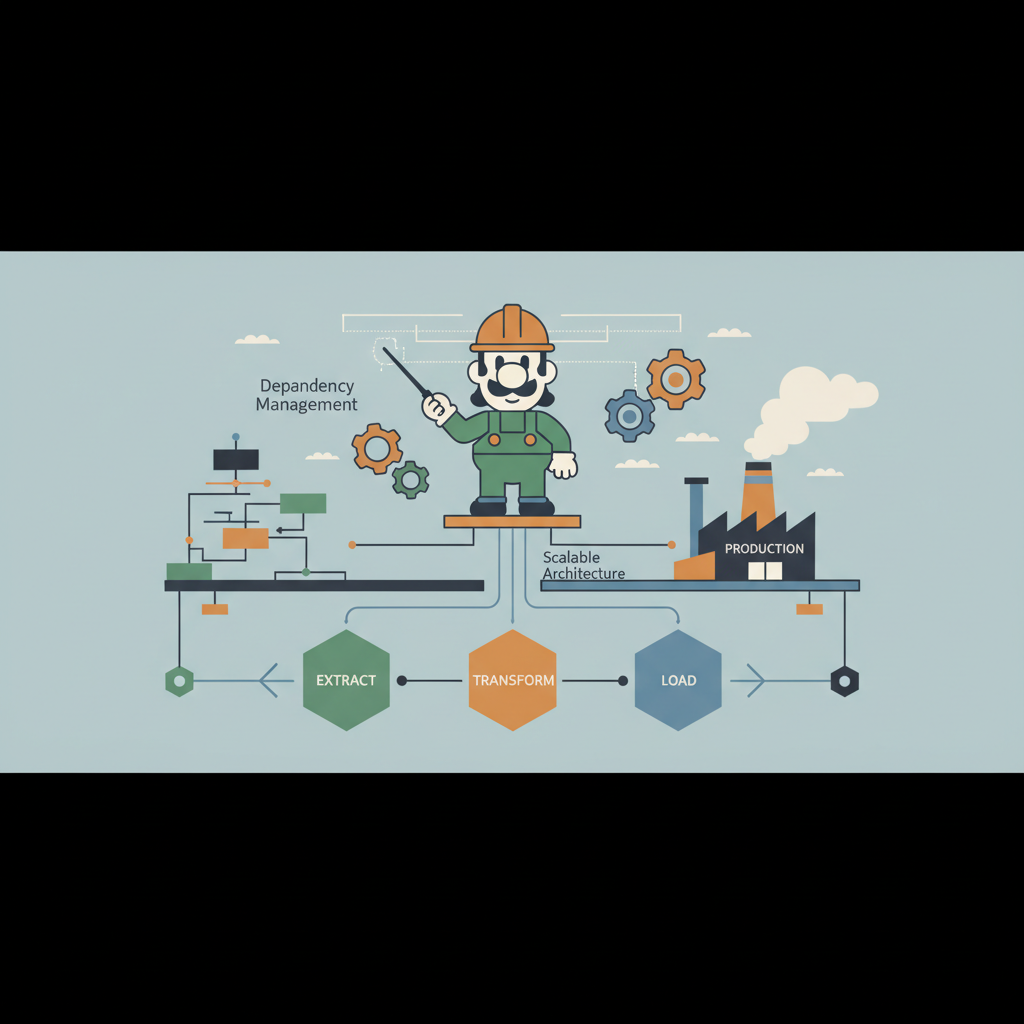

Architecting a Software Factory: Building Scalable Development Engines with Structured Agent Workflows

A deep dive into building a production-ready software factory, outlining architecture, agent orchestration, and scaling strategies for modern engineering teams.