Introduction

Edge computing is reshaping how data‑intensive applications respond to latency, bandwidth, and privacy constraints. By moving compute resources closer to the data source (a sensor, smartphone, or autonomous vehicle), organizations can achieve real‑time insights while reducing the load on central clouds.

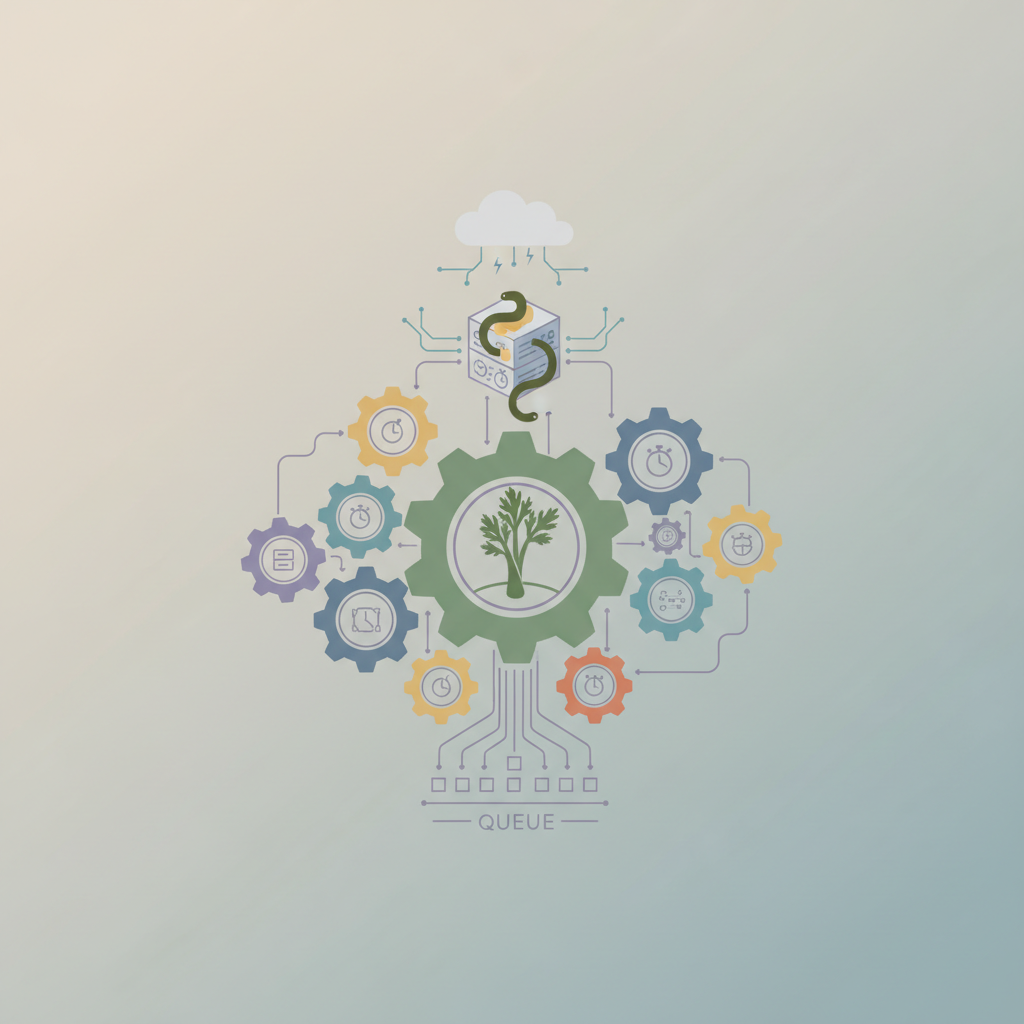

A common pattern in edge workloads is the asynchronous agent: a lightweight process that reacts to events, performs computation, and often delegates longer‑running work to a downstream system. As the number of agents grows, coordinating their work becomes a non‑trivial problem. Distributed task queues provide a robust abstraction for decoupling producers (the agents) from consumers (workers), handling retries, back‑pressure, and load balancing across a heterogeneous edge fleet.
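To make the producer/consumer split concrete, here is a minimal in-process sketch using Python's standard `asyncio.Queue`. It is an illustration under stated assumptions, not a distributed implementation: a real edge deployment would typically put a networked broker between agents and workers. The names `Task`, `TransientError`, `process`, `agent`, `worker`, `run_demo`, and `MAX_ATTEMPTS` are hypothetical, and the failure rule in `process` is invented purely to exercise the retry path.

```python
import asyncio
from dataclasses import dataclass

MAX_ATTEMPTS = 3  # hypothetical retry budget per task


class TransientError(Exception):
    """Stands in for a recoverable failure (assumption for this sketch)."""


@dataclass
class Task:
    task_id: int
    payload: int
    attempts: int = 0


def process(task: Task) -> int:
    # Invented failure rule: ids divisible by 5 fail on their first attempt.
    if task.task_id % 5 == 0 and task.attempts == 1:
        raise TransientError(f"task {task.task_id} failed once")
    return task.payload * 2


async def agent(agent_id: int, queue: asyncio.Queue, n_events: int) -> None:
    """Producer: turns each event into a task and hands it to the queue."""
    for i in range(n_events):
        task = Task(task_id=agent_id * 100 + i, payload=i)
        # put() suspends while the bounded queue is full, which gives
        # the producer simple back-pressure.
        await queue.put(task)


async def worker(queue: asyncio.Queue, results: dict, failed: list) -> None:
    """Consumer: pulls tasks and retries transient failures in place."""
    while True:
        task = await queue.get()
        try:
            for _ in range(MAX_ATTEMPTS):
                task.attempts += 1
                try:
                    results[task.task_id] = process(task)
                    break
                except TransientError:
                    # Yield to other coroutines; a real worker would back off.
                    await asyncio.sleep(0)
            else:
                failed.append(task.task_id)  # retry budget exhausted
        finally:
            queue.task_done()


async def run_demo(n_agents: int = 3, n_events: int = 4, n_workers: int = 2):
    queue: asyncio.Queue = asyncio.Queue(maxsize=4)  # bounded on purpose
    results: dict = {}
    failed: list = []
    workers = [
        asyncio.create_task(worker(queue, results, failed))
        for _ in range(n_workers)
    ]
    # Run all agents to completion, then wait until every task is processed.
    await asyncio.gather(*(agent(a, queue, n_events) for a in range(n_agents)))
    await queue.join()
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results, failed


if __name__ == "__main__":
    done, dead = asyncio.run(run_demo())
    print(f"{len(done)} tasks completed, {len(dead)} failed permanently")
```

Because whichever idle worker calls `get()` first receives the next task, load spreads across workers without the agents knowing how many exist. Retrying in place rather than re-enqueueing is a simplification chosen here to avoid a worker blocking on a full queue.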

...